Indian Journal of Science and Technology

Year: 2021, Volume: 14, Issue: 2, Pages: 141-153

Original Article

T Mythili1*, A Anbarasi2

1Ph.D. Research Scholar, Department of Computer Science, L. R. G. Government Arts College for Women, Tiruppur, Tamilnadu, India

2Assistant Professor, Department of Computer Science, L. R. G. Government Arts College for Women, Tiruppur, Tamilnadu, India

*Corresponding Author

Email: [email protected]

Received Date: 26 November 2020, Accepted Date: 26 December 2020, Published Date: 20 January 2021

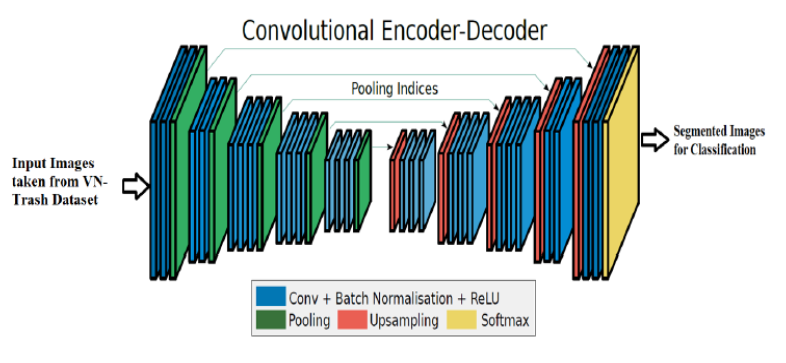

Objective: To maximize the accuracy of biomedical waste classification, this article proposes an Enhanced Segmentation Network (EnSegNet) combined with Deep Neural Network-Trash Classification (EnSegNet-DNN-TC). Methods: First, a trainable core segmentation network, SegNet, is presented; it consists of an Encoder-Decoder Network (EDN) followed by a pixel-wise classification layer for image segmentation. The decoder upsamples its low-resolution input feature maps using the max-pooling indices computed in the corresponding encoder. SegNet also uses fewer parameters for training. The uncertainty inherent to the EDN is modeled by Bayesian functions to segment the input images. However, this SegNet can sample only a limited number of pixels in the images. Hence, EnSegNet is proposed, which uses Content-Sensitive Sampling (CSS) to sample more pixels in data-sparse regions and fewer pixels in data-dense regions. Once segmentation is complete, the DNN classifies the waste using the segmented images. Findings: The experimental results show that, in a comparison against DNN-TC on 100 images of different categories of biomedical waste from the trash image dataset, the EnSegNet-DNN-TC framework achieves 88% accuracy.
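The decoder upsampling step mentioned in the Methods can be illustrated with a minimal sketch. It is not the authors' implementation: it assumes 2x2 pooling windows with stride 2 on a single-channel feature map with even dimensions, and the function names `max_pool_2x2` and `max_unpool_2x2` are hypothetical. The encoder records where each maximum came from, and the decoder places each pooled value back at that position, leaving the remaining entries at zero.

```python
def max_pool_2x2(fmap):
    """2x2 max-pooling with stride 2 (assumes even height and width).

    Returns the pooled map and, for every window, the (row, col)
    position of its maximum in the input map.
    """
    rows, cols = len(fmap), len(fmap[0])
    pooled, indices = [], []
    for r in range(0, rows, 2):
        pooled_row, index_row = [], []
        for c in range(0, cols, 2):
            # Collect the four (value, position) pairs of this window.
            window = [(fmap[r + dr][c + dc], (r + dr, c + dc))
                      for dr in (0, 1) for dc in (0, 1)]
            value, position = max(window, key=lambda item: item[0])
            pooled_row.append(value)
            index_row.append(position)
        pooled.append(pooled_row)
        indices.append(index_row)
    return pooled, indices


def max_unpool_2x2(pooled, indices, out_shape):
    """Upsample by writing each pooled value back to its stored position."""
    rows, cols = out_shape
    out = [[0] * cols for _ in range(rows)]
    for pooled_row, index_row in zip(pooled, indices):
        for value, (r, c) in zip(pooled_row, index_row):
            out[r][c] = value
    return out


# Illustrative 4x4 feature map (made-up values, not data from the article).
feature_map = [
    [1, 3, 2, 0],
    [4, 2, 1, 5],
    [0, 1, 7, 2],
    [6, 2, 3, 1],
]
pooled, indices = max_pool_2x2(feature_map)             # encoder side
upsampled = max_unpool_2x2(pooled, indices, (4, 4))     # decoder side
```

Here `pooled` is the 2x2 low-resolution map and `upsampled` is a sparse 4x4 map in which only the positions of the original maxima are non-zero. In an encoder-decoder design of this kind, such a sparse map would typically be passed through trainable convolution layers to produce dense feature maps; that step is omitted from the sketch.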

Keywords: Biomedical wastage classification; deep learning; image segmentation; ResNext; encoder-decoder network

© 2021 Mythili & Anbarasi. This is an open access article distributed under the terms of the Creative Commons Attribution License, which permits unrestricted use, distribution, and reproduction in any medium, provided the original author and source are credited. Published by Indian Society for Education and Environment (iSee).
